This post continues the journey of creating a dotnet application, containerizing it and ultimately deploying the image to production.

The first thing we need to do is get the source into a source repository (I’m of course going to use VSTS). Then we need to configure a build and push the images to a registry. We will then be able to deploy the images from the registry to our hosts, but more on that later.

Note: Some of these steps may incur some cost, so I would highly recommend at the very least creating a Dev Essentials account. This should cover any costs while we are playing.

I’m assuming you have already pushed your code to a repository in VSTS, so the next step is to create an Azure account, if you do not have one already, and then to set up a container registry.

To create your own private Azure container registry to publish the images to:

- Log in to Azure

- Select container registries

- This should give you a list and you will need to click “add” to create a new container registry

- Fill in the required details and create a new registry

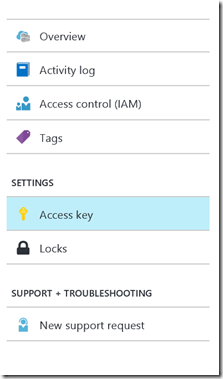

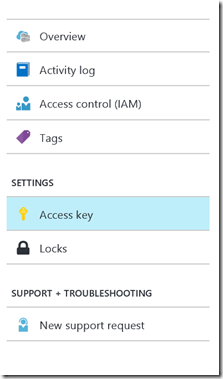

- Once created, open up the blade and select the Access Key settings. This should contain the registry name, login server and user name and password details (make sure the “Admin User” is enabled)
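
Before wiring these details into VSTS, it can be worth checking them from a machine that has Docker installed. The snippet below is only a rough sketch of how that check could look: the function names and the example login server are mine (hypothetical), and it assumes the `docker` CLI is on your path.

```python
import subprocess
from typing import List


def docker_login_command(login_server: str, username: str) -> List[str]:
    """Build a `docker login` command that reads the password from stdin."""
    return ["docker", "login", "--username", username, "--password-stdin", login_server]


def check_credentials(login_server: str, username: str, password: str) -> bool:
    """Return True if `docker login` accepts the Access Key details."""
    result = subprocess.run(
        docker_login_command(login_server, username),
        input=password.encode("utf-8"),  # password goes in via stdin, not argv
        capture_output=True,
    )
    return result.returncode == 0
```

Something like `check_credentials("myregistry.azurecr.io", "myregistry", "<password>")` would then tell you whether the admin user is enabled and the password is right, where the login server and user name are placeholders for your own values.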

Now let’s move on to VSTS.

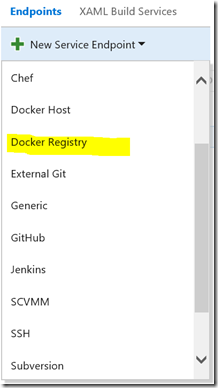

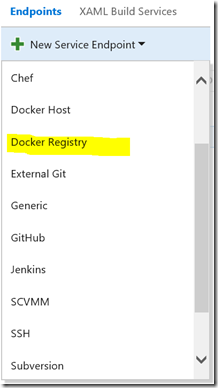

First we need to “connect” VSTS and your container registry:

- Log in to your VSTS project and, under settings, select the services configuration:

- Using the details from the Access Key settings on the Azure container registry blade, create a Docker registry service with your “Login Server” as the Docker registry URL, plus the user name and password:

Finally, it is time to create the build. As you would expect, add a new “empty” build definition that links to your source repository. Instead of selecting the “Hosted” build queue, use the “Hosted Linux Preview” queue, because Docker is not yet available on the normal hosted Windows agents.

Add 2 command line tasks and 3 docker tasks:

Note: If you do not have the Docker tasks, you will need to install them from the marketplace.

Now configure the tasks as follows:

| Task | Settings |
| --- | --- |
| Command Line 1 | Tool: dotnet<br>Arguments: restore<br>Advanced/Working Folder: The folder that your source is located in. In my case it was $(build.sourcesdirectory)/dotnet_sample/ |
| Command Line 2 | Tool: dotnet<br>Arguments: publish -c release -o $(build.sourcesdirectory)/dotnet_sample/output/ (or an "output" folder under your source location)<br>Advanced/Working Folder: see above |
| Docker 1 | Docker Registry Connection: the service connection that you created earlier<br>Action: Build an image<br>Docker File: The location of your docker file. In my case it was $(build.sourcesdirectory)/dotnet_sample/dockerfile<br>Build Context: The location of your source code. In my case $(build.sourcesdirectory)/dotnet_sample<br>Image Name: The name and tag that you want to give your image. In my case I just used dotnet_sample:$(Build.BuildId)<br>Advanced/Working Folder: same as the other working folders |
| Docker 2 | Docker Registry Connection: the service connection that you created earlier<br>Action: Run a Docker Command<br>Command: tag dotnet_sample:$(Build.BuildId) $(DockerRegistryUrl)/sample/dotnet_sample:$(Build.BuildId) (the name must be the same as in the task above, and $(DockerRegistryUrl) must be your Azure container registry URL or login server)<br>Advanced/Working Folder: same as the other working folders |
| Docker 3 | Docker Registry Connection: the service connection that you created earlier<br>Action: Push an image<br>Image Name: The name you passed in when tagging your container above. In my case it was $(DockerRegistryUrl)/sample/dotnet_sample:$(Build.BuildId)<br>Advanced/Working Folder: same as the other working folders |
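
If you want to reason about what these five tasks do, it can help to see them as the plain commands they roughly correspond to. The sketch below is my own approximation, not what the VSTS tasks literally execute: `build_commands` is a hypothetical helper, and the `sample` namespace and `dotnet_sample` image name are simply the values I used above.

```python
from typing import List


def build_commands(source_dir: str, registry: str, build_id: str,
                   image: str = "dotnet_sample",
                   namespace: str = "sample") -> List[List[str]]:
    """Return the five build steps as argv lists, in task order."""
    local_tag = f"{image}:{build_id}"
    # The registry-qualified name is what the push step needs.
    remote_tag = f"{registry}/{namespace}/{image}:{build_id}"
    return [
        ["dotnet", "restore"],                                                # Command Line 1
        ["dotnet", "publish", "-c", "release", "-o", f"{source_dir}/output/"],  # Command Line 2
        ["docker", "build", "-f", f"{source_dir}/dockerfile",
         "-t", local_tag, source_dir],                                        # Docker 1
        ["docker", "tag", local_tag, remote_tag],                             # Docker 2
        ["docker", "push", remote_tag],                                       # Docker 3
    ]
```

Each entry could be handed to `subprocess.run(cmd, cwd=source_dir, check=True)` on a machine with the dotnet and Docker tooling installed. Note how the tag step is the only place where the local name and the registry-qualified name meet, which is why the names in Docker 1, 2 and 3 have to line up exactly.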

Now you can save and queue the build. Hopefully it will look something like this:

If everything has passed, a quick and easy way to see whether your image is in your registry is to navigate to your Docker registry’s catalog URL: “https://<<registry_url>>/v2/_catalog”. This will likely prompt you to log in with the user name and password that you set up previously, and then you will download a JSON file. Opening this file will show you all the images hosted in your registry.
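
If you would rather not click through the browser, the same check can be scripted. This is a minimal sketch that assumes the registry accepts the admin user name and password as HTTP basic authentication (which is what the browser prompt suggests) and that the response follows the standard registry catalog shape with a `repositories` list. The function names and the example host are hypothetical.

```python
import base64
import json
import urllib.request
from typing import List


def catalog_request(registry_host: str, username: str, password: str) -> urllib.request.Request:
    """Build an authenticated request for the registry's catalog endpoint."""
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        f"https://{registry_host}/v2/_catalog",
        headers={"Authorization": f"Basic {token}"},
    )


def parse_catalog(body: str) -> List[str]:
    """Extract the repository names from the catalog JSON."""
    return json.loads(body).get("repositories", [])


def list_repositories(registry_host: str, username: str, password: str) -> List[str]:
    """Fetch the catalog and return the repository names it lists."""
    with urllib.request.urlopen(catalog_request(registry_host, username, password)) as response:
        return parse_catalog(response.read().decode("utf-8"))
```

After a successful build, I would expect `list_repositories(...)` to include `sample/dotnet_sample`, since that is the name used when tagging the image.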

In this post we have moved from a locally created image to one residing in our private registry. In the next post we will continue the journey a bit further…